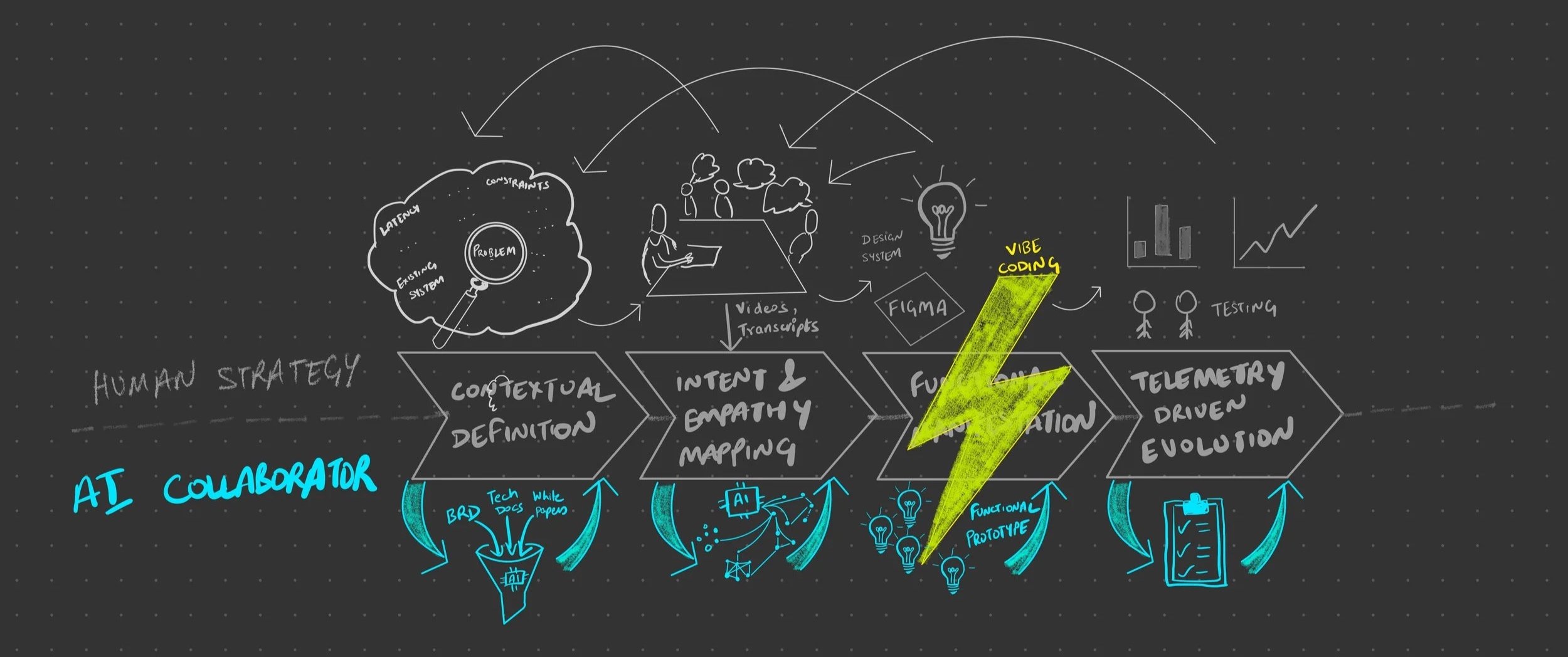

How I work: Research-led, AI-amplified

My process is built on a simple belief: AI should amplify human judgment, not replace it. I bring the curiosity, the empathy, and the cross-functional relationships - AI helps me move faster, synthesize deeper, and build sooner. The result is a loop that gets from raw problem to validated solution in a fraction of the time, without sacrificing the human signal that makes design meaningful.

Phase 1(a): Contextual Definition

Building the Technical Foundation - with Engineers, Not Just Specs

Every project starts with getting the technical reality right - not just reading specs, but sitting with engineers to understand the constraints they live with. I run collaborative sessions with engineering and product partners to surface the "unknown unknowns" that hide in complex distributed systems: latency boundaries, reasoning limitations, privacy guardrails, failure modes.

Once I have that foundation, I feed the full technical context - documentation, engineering notes, constraint discussions - into AI to synthesize it into a focused set of design guardrails. This isn't passive summarization. I'm looking for the constraints that will most directly affect user experience, and using AI to stress-test whether I've found them all.

Collaborative constraint mapping: I work directly with engineers and PMs to define the sandbox before a single design decision is made. The technical constraints aren't a handoff document - they're a shared foundation.

AI-assisted gap detection: I use AI to find the failure modes and boundary conditions that will show up in production but not in specs.

Phase 1(b): Intent and Empathy Mapping

Deep User Research - I Bring the Empathy, AI Brings the Scale

This is where I do the human work that AI can't do for me. I conduct the interviews. I ask the follow-up questions. I notice when someone hesitates. After sessions, I feed the full transcript corpus - interviews, support tickets, forum data - into AI to find patterns I couldn't manually process. That synthesized intelligence becomes the foundation for Personas, Jobs to be Done, and Day-in-the-Life scenarios - which I build collaboratively with PMs and engineers, not in isolation.

Human-first interviews: I run every session myself, because follow-up questions and moments of hesitation only register in a live conversation. AI helps me process what I've collected - it doesn't replace the conversation.

Collaborative frameworks: Personas and JTBD aren't AI outputs I present to the team - they're starting points I build with PMs and engineers so the whole cross-functional team owns the customer understanding.

Phase 2: Functional Manifestation

From Insight to Working Prototype: Bypassing static mocks for faster alignment

This is where my engineering background becomes a superpower. Rather than spending weeks building static mocks, I compile everything from Phases 1(a) and 1(b) - technical constraints, user mental models, edge cases, personas - into a structured context file. That context file feeds directly into my vibe coding environment (Kiro, VS Code), producing a functional prototype that handles real state changes, real error conditions, and real latency from day one.

From there, iteration is fast. I share prototypes with PMs early, run sessions with real customers, and fold feedback back into the context file for the next cycle.

Context-file-driven development: I compile technical constraints, user insights, and edge cases into a structured context file that I feed into Kiro or similar tools - ensuring the prototype reflects the full system complexity, not just the happy path.

Leadership alignment without translation: Leaders interact with real behavior, not a description of it - which means faster, higher-quality decisions.

Iterative loops with real users and PMs: Prototypes go in front of customers early and often. Feedback feeds back into the context file. Each iteration is informed by real behavior, not assumptions.
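To make the context-file idea concrete, here is a minimal sketch of one way such a file could be structured and updated between iterations. Everything in it is an assumption for illustration: the `DesignContext` class, its field names, and the JSON output are hypothetical and do not describe the actual format used with Kiro or any other tool.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class DesignContext:
    """Hypothetical shape for a prototype context file (not a real tool format)."""

    technical_constraints: List[str] = field(default_factory=list)
    user_insights: List[str] = field(default_factory=list)
    edge_cases: List[str] = field(default_factory=list)
    feedback_log: List[Dict[str, str]] = field(default_factory=list)

    def add_feedback(self, source: str, note: str) -> None:
        """Fold one piece of feedback back in for the next iteration."""
        self.feedback_log.append({"source": source, "note": note})

    def to_json(self) -> str:
        """Serialize the context so it can be handed to a coding tool."""
        return json.dumps(asdict(self), indent=2)


# Placeholder entries only; real content would come from Phases 1(a) and 1(b).
context = DesignContext(
    technical_constraints=["<latency boundary agreed with engineering>"],
    user_insights=["<pattern found across interview transcripts>"],
    edge_cases=["<failure mode surfaced during constraint mapping>"],
)
context.add_feedback("customer session", "<what the user struggled with>")
print(context.to_json())
```

Keeping constraints, insights, edge cases, and feedback in one serializable object means each prototype cycle starts from the same accumulated record rather than from scattered notes.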

Phase 3: Telemetry-Driven Evolution

Closing the loop with data to ensure design decisions move the needle.

Before launch, I define success metrics with PMs and engineering - task completion, error rates, sentiment signals - so we know what we're measuring from day one. After launch, I use AI to process telemetry and user feedback at scale, surfacing patterns faster than manual analysis allows. Then I stay in conversation with customers, because metrics tell you what broke and users tell you why.

Those insights close the loop - feeding back into the next context file, informing the next prototype, shaping the next roadmap conversation.

Pre-launch metric definition: I define success metrics with PMs and engineering before launch, not after - so we know exactly what we're measuring and why from day one.

AI-powered telemetry analysis: Patterns and anomalies surface faster with AI processing the data.

Continuous customer conversation: Metrics tell you what broke. Customers tell you why. I stay close to both, using each to interrogate the other.
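As a rough illustration of pre-launch metric definition, the sketch below computes two of the metrics named above, task completion and error rate, from session records. The `Session` record and its fields are hypothetical; a real telemetry schema would be agreed with PMs and engineering and would likely look different.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Session:
    """Hypothetical telemetry record for one user session."""

    task_completed: bool
    error_count: int


def summarize(sessions: List[Session]) -> Dict[str, Optional[float]]:
    """Return the share of sessions that completed the task and the share that hit an error."""
    total = len(sessions)
    if total == 0:
        # No data yet: report None rather than a misleading 0%.
        return {"task_completion_rate": None, "sessions_with_errors_rate": None}
    completed = sum(1 for s in sessions if s.task_completed)
    with_errors = sum(1 for s in sessions if s.error_count > 0)
    return {
        "task_completion_rate": completed / total,
        "sessions_with_errors_rate": with_errors / total,
    }


# Made-up sample data, for illustration only.
sample = [
    Session(task_completed=True, error_count=0),
    Session(task_completed=False, error_count=2),
    Session(task_completed=True, error_count=1),
    Session(task_completed=True, error_count=0),
]
print(summarize(sample))
```

Numbers like these show what changed; the customer conversations described above are still needed to explain why.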